Recent News

-

A Johns Hopkins study finds that large language models are more likely to generate irrelevant or harmful responses when operating in underrepresented languages.

-

New faculty Q&A: Eric Nalisnick

Get to know Eric Nalisnick, who joins Johns Hopkins as an assistant professor of computer science.

-

The Nexus Awards Program supports a diverse range of programming, research, and teaching at the university’s Bloomberg Center at 555 Pennsylvania Ave.

-

Yinzhi Cao explains what happens when a bot is “jailbroken” and how large language models can be built to be less susceptible to such hacks.

-

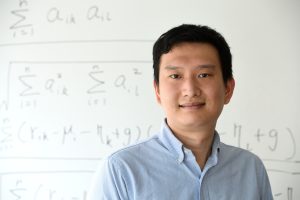

Signs of change

PhD student Xuan Zhang is researching sign language recognition and translation tasks with the aim of developing a large vision-language model capable of translating signed words into spoken language.

-

The third-year computer science student received a Provost’s Undergraduate Research Award to train machine learning models to distinguish between different types of heart failure.

-

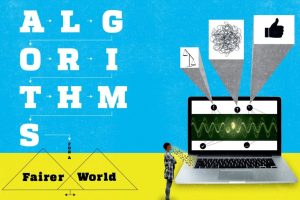

Algorithms for a fairer world

Machine learning technologies hold the potential to revolutionize decision-making. But how can we ensure AI systems are free of bias? Our experts weigh in.

-

In AI we trust?

Given AI models’ ubiquity and influence, how and why should we trust their decisions? Can we be certain their predictions are free of biases or errors? Johns Hopkins AI experts discuss.